If you are building a homelab, you will hit this question fast: should you run a service in a VM, or in a container?

The short answer is that both are valid, but they solve different problems. If you treat them as interchangeable, you usually end up with either unnecessary overhead or unnecessary risk.

In this post you will get:

- A mental model you can remember

- The isolation and security differences that actually matter

- A simple decision guide (plus a beginner-friendly default setup for Proxmox)

If you are brand new to self-hosting, browsing the Self-Hosting category first can help you see the common building blocks.

The simplest mental model (without the buzzwords)

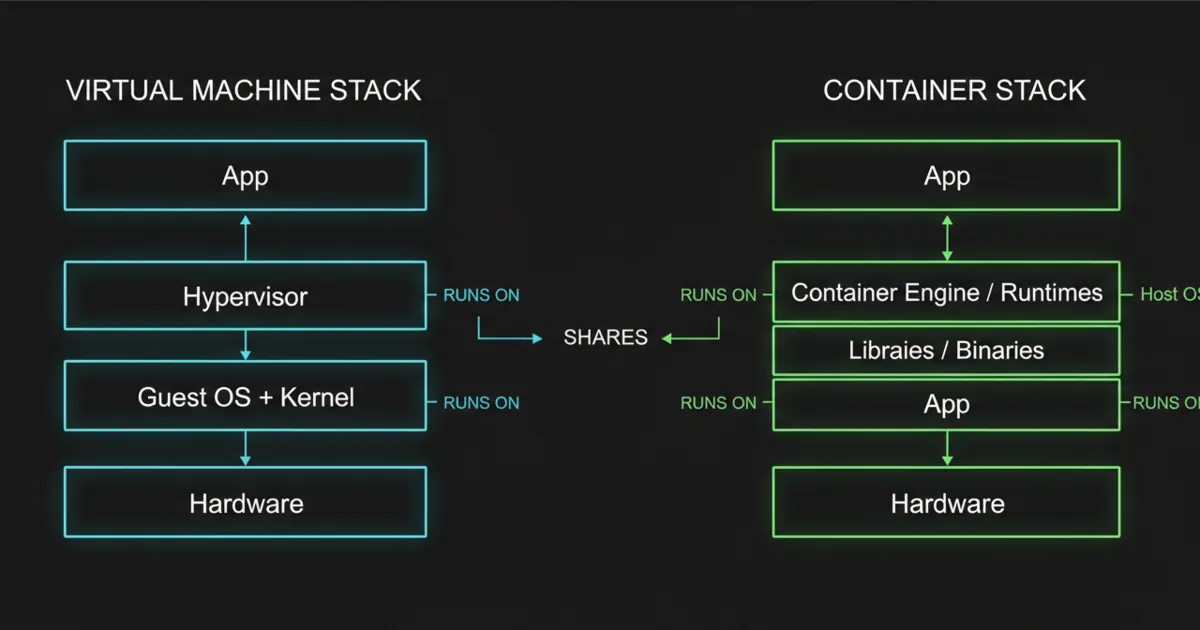

A virtual machine (VM) virtualizes hardware. In practice, that means the VM behaves like its own computer: it has its own virtual CPU, RAM, and disk, and it runs its own OS with its own kernel.

A container isolates processes using features of the host OS. The key detail is that containers share the host kernel. You are not booting a second kernel for each container.

That kernel-sharing point explains most of the trade-offs you see in real life.
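If you want to see the kernel-sharing point for yourself, a tiny script like the one below can help. This is only an illustrative sketch: the function name `kernel_info` is made up here. Run on a Linux host and then inside a container on that host, it should report the same kernel release, because both are asking the same kernel. Inside a VM it reports whatever kernel the guest OS booted, which can differ from the host (a matching string alone does not prove the kernel is shared).

```python
import platform


def kernel_info() -> str:
    """Return the name and release of the kernel this process is running on."""
    # platform.release() asks the running kernel for its release string.
    # A container has no kernel of its own, so it sees the host's value.
    return f"{platform.system()} {platform.release()}"


if __name__ == "__main__":
    print(kernel_info())
```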

Isolation and security: what boundary are you actually getting?

This is where homelab choices become real, because most homelabs eventually run a mix of trusted internal tools, experiments, and internet-facing services.

With a VM

A VM is generally a stronger isolation boundary because the guest runs its own kernel and is separated from the host at the hypervisor layer.

If a service inside the VM gets compromised, the attacker still has to break out of the VM boundary to reach the host.

It is not perfect security, but it is a clean boundary that matches how people naturally think about separating systems.

With containers

Containers isolate processes, but they share the host kernel.

That changes the risk model. You can absolutely run stable services in containers, but you should not assume a container gives you the same boundary as a VM.

A good beginner rule is:

- If you would be uncomfortable giving a service root on your server, think twice about running it in a container directly on that server, where the shared kernel is the only thing separating it from the host.

This does not mean never use containers. It means use containers intentionally.

Performance and overhead: why containers feel fast

Containers tend to be lightweight:

- No separate OS kernel per workload

- Smaller images (depending on how you build them)

- Fast start and stop behavior

VMs tend to carry more overhead because each VM runs a full guest OS with its own kernel, and usually needs more disk and RAM set aside for it.

In a homelab, the performance difference matters most when you are running many small services, your server has limited RAM, or you want quick deploy and rollback cycles.

If you are running a handful of services total and you value clean boundaries and simple troubleshooting, a couple of VMs can be the calmer choice.

Operational differences that matter more than raw speed

A lot of VM vs container debates ignore the boring parts. In a homelab, the boring parts are the whole game.

Updates and breakage

In a VM, you update the OS like a normal server. Services inside can be managed with systemd, packages, or Docker.

With containers, you typically update by pulling a new image and recreating the container.

Containers encourage a replace-not-repair workflow. That is great when you have good config plus data separation, and painful when you do not.
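As a rough sketch of that replace-not-repair flow, the Python below builds the Docker CLI commands for an update without running them. The `Service` class, the `wiki` name, the image tag, and the volume name are all hypothetical, chosen only for illustration. Your real `docker run` line will likely need more options (ports, environment, restart policy).

```python
from dataclasses import dataclass, field


@dataclass
class Service:
    """A hypothetical description of one containerized app."""
    name: str
    image: str
    # Maps a volume name or host path to the mount path inside the container.
    volumes: dict[str, str] = field(default_factory=dict)


def recreate_commands(svc: Service) -> list[list[str]]:
    """Build the commands to replace a container with one from a newer image."""
    run = ["docker", "run", "-d", "--name", svc.name]
    for source, target in svc.volumes.items():
        run += ["-v", f"{source}:{target}"]
    run.append(svc.image)
    return [
        ["docker", "pull", svc.image],  # fetch the new image
        ["docker", "stop", svc.name],   # stop the old container
        ["docker", "rm", svc.name],     # remove it; data in volumes stays
        run,                            # start a fresh container
    ]


if __name__ == "__main__":
    wiki = Service(name="wiki", image="example/wiki:2.0",
                   volumes={"wiki_data": "/data"})
    for cmd in recreate_commands(wiki):
        print(" ".join(cmd))
```

Notice that nothing in this sequence touches the volume. That is the whole point: the container is disposable, the data is not.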

Where state lives (this is the container make-or-break point)

For containers, you want a clean split:

- Container image: the app

- Volumes or bind mounts: the data

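If you blur that line, every upgrade turns into hoping nothing breaks, because recreating a container throws away anything that was written inside it rather than to a volume or bind mount.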
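One way to keep that split honest is to write down where an app stores data and check that every one of those paths sits inside a mount. The helper below is a minimal sketch under that assumption: the function name `uncovered_paths` and the example paths are hypothetical, and you would have to find the real data paths in each app's documentation.

```python
from pathlib import PurePosixPath


def uncovered_paths(data_paths: list[str], mount_targets: list[str]) -> list[str]:
    """Return the data paths that are not inside any volume or bind mount."""
    mounts = [PurePosixPath(m) for m in mount_targets]
    missing = []
    for raw in data_paths:
        path = PurePosixPath(raw)
        # Covered if the path is a mount point itself or lives under one.
        covered = any(path == m or m in path.parents for m in mounts)
        if not covered:
            missing.append(raw)
    return missing


if __name__ == "__main__":
    # Hypothetical app: writes a database under /data and uploads under /app.
    print(uncovered_paths(["/data/db", "/app/uploads"], ["/data"]))
    # → ['/app/uploads']
```

Anything this returns would be lost the next time the container is recreated.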

Networking mental load

You can do clean networking in both models, but the day-to-day experience differs.

VMs feel like a server on your LAN. Containers feel like an app behind a networking abstraction.

Beginners often find VM networking easier to reason about when they are also learning DNS, reverse proxies, and firewall rules.

Backups and restores

You want to be able to answer this without guessing: If this box dies, how do I restore services and data?

VM-heavy setups often do well here because you back up the whole VM disk, so it is obvious what the backup contains.

Container-heavy setups can be excellent too, but only if your volumes are well-organized and you have a tested restore path.
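A "tested restore path" can start very small. The sketch below archives a data directory, restores it somewhere else, and compares the two. It is an illustration only, using Python's standard `tarfile` module. The function names are made up here, and real backups of databases usually need the app stopped or a proper dump first.

```python
import tarfile
import tempfile
from pathlib import Path


def backup_dir(source: Path, archive: Path) -> None:
    """Write the contents of source into a gzip-compressed tar archive."""
    with tarfile.open(archive, "w:gz") as tar:
        tar.add(source, arcname=".")


def restore_dir(archive: Path, target: Path) -> None:
    """Extract an archive created by backup_dir into target."""
    target.mkdir(parents=True, exist_ok=True)
    with tarfile.open(archive, "r:gz") as tar:
        tar.extractall(target)


def snapshot(root: Path) -> dict[str, bytes]:
    """Map each file's path (relative to root) to its contents."""
    return {
        str(p.relative_to(root)): p.read_bytes()
        for p in sorted(root.rglob("*"))
        if p.is_file()
    }


def restore_matches(source: Path) -> bool:
    """Back up source, restore it to a scratch directory, and compare files."""
    with tempfile.TemporaryDirectory() as tmp:
        archive = Path(tmp) / "backup.tar.gz"
        restored = Path(tmp) / "restored"
        backup_dir(source, archive)
        restore_dir(archive, restored)
        return snapshot(source) == snapshot(restored)
```

Calling `restore_matches(Path("/path/to/your/data"))` on a bind-mounted data directory is a cheap first check that the backup you think you have can actually be read back.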

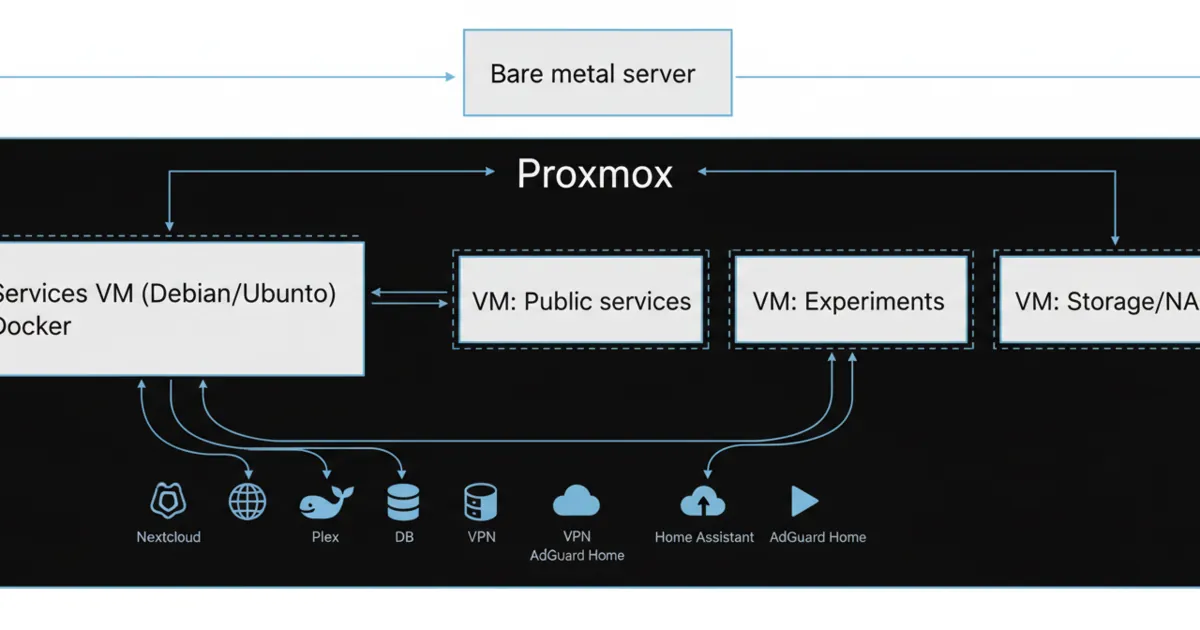

How this maps to Proxmox, LXC, and Docker

Homelab reality is that you are usually choosing between specific options, not abstract definitions.

- Proxmox VMs use KVM virtualization (these are your true VMs).

- Proxmox LXC containers are system containers (a full OS userland, but sharing the host kernel).

- Docker containers are application containers (the app packaged into an image, typically with its dependencies).

A common beginner-friendly setup is:

- Proxmox as the base

- One Services VM (Debian or Ubuntu) that runs Docker for most apps

- A few dedicated VMs for things that deserve stronger isolation

This gives you VM boundaries where it matters and container convenience where it helps.

If you want to explore the ecosystem, the Proxmox category and Docker category are good starting points.

A practical decision guide (use this, not vibes)

Pick a VM when:

- You want a hard boundary between workloads

- You are running something internet-facing and you want to contain blast radius

- You need a different OS or kernel behavior

- You want it to behave like an ordinary server that you update and manage with packages and systemd

- You want straightforward hypervisor snapshots and backups

Pick containers when:

- You are running many small services

- You want easy deploy and rollback by recreating containers

- You want consistent packaging across machines

- You are comfortable with config plus volumes as the source of truth

- You want higher density on limited hardware

Pick a hybrid setup (very common) when you want VM boundaries for safety and sanity, but you still want container ergonomics for apps.

A strong default for most beginners:

- Put Docker in one VM.

- Put your core internal services there.

- Use separate VMs for anything you expose to the public internet, or anything you experiment with heavily.
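If it helps to see the beginner default written down as rules, here is one way to sketch it. The `Workload` fields and the returned labels are hypothetical and cover only the strongest signals from the guide above. Treat it as a starting point to adapt, not a complete policy.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    """A hypothetical description of a service you plan to run."""
    name: str
    public_facing: bool = False     # exposed to the public internet
    experimental: bool = False      # something you tinker with heavily
    needs_own_kernel: bool = False  # needs a different OS or kernel behavior


def choose_boundary(w: Workload) -> str:
    """Apply the beginner default: risky things get their own VM."""
    if w.public_facing or w.experimental or w.needs_own_kernel:
        return "dedicated VM"
    return "container in services VM"


if __name__ == "__main__":
    for w in [
        Workload("internal-dashboard"),
        Workload("public-blog", public_facing=True),
        Workload("test-cluster", experimental=True),
    ]:
        print(f"{w.name}: {choose_boundary(w)}")
```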

Common mistakes (and how to avoid them)

Mistake 1: treating containers like mini-VMs

If you are using containers, be explicit about what you are isolating: processes, not kernels.

If you need a clearer boundary, use a VM.

Mistake 2: mixing unrelated services with no rollback plan

If a container host becomes the everything box, updates get scary.

Group services by purpose, keep configs in a clean folder structure, and keep a simple restore checklist.

Mistake 3: letting data sprawl everywhere

Put data in predictable paths. Name volumes clearly. Make it obvious what needs to be backed up.
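One possible way to make paths predictable is to derive them from the service name, so the backup list falls out of the naming scheme. The base directory `/srv/services`, the `config` and `data` folder names, and the function names below are all assumptions made for this sketch. Pick whatever layout suits you, as long as it is consistent.

```python
from pathlib import PurePosixPath

# Hypothetical base directory on the Docker host.
BASE = PurePosixPath("/srv/services")


def layout(service: str) -> dict[str, PurePosixPath]:
    """Return the config and data directories for one service."""
    root = BASE / service
    return {"config": root / "config", "data": root / "data"}


def backup_list(services: list[str]) -> list[str]:
    """List every directory that needs backing up, in a stable order."""
    return [str(p) for s in sorted(services) for p in layout(s).values()]


if __name__ == "__main__":
    for path in backup_list(["wiki", "dns"]):
        print(path)
```

With a scheme like this, "what do I need to back up?" has one answer: everything under the base directory.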

Mistake 4: optimizing for efficiency instead of recoverability

A homelab is not a benchmark. It is a system you maintain.

Choose the setup you can restore on a bad day.

FAQ

Are containers faster than VMs?

Containers are often lighter-weight because they share the host kernel, but “faster” depends on workload.

The bigger difference is operational: containers are easier to redeploy and scale as small units.

Is LXC the same as Docker?

Not exactly. LXC provides system containers (a full OS userland without a separate kernel). Docker is typically used for application containers.

In a homelab, both are containers, but the workflows and expectations differ.

Should I run Docker directly on Proxmox?

Many people avoid it because it mixes hypervisor host concerns with app host concerns.

A common approach is running Docker inside a dedicated VM so the Proxmox host stays clean.

What is the safest default for a beginner?

A services VM running Docker, plus separate VMs for anything risky or public-facing, is a safe and maintainable baseline.

What if I only have one small machine?

You can still use the same pattern. Even one VM for services can help, and you can start with containers inside that VM.

Next steps

Pick one service you plan to run this week and decide its boundary upfront.

If it is public-facing or experimental, put it in its own VM. If it is a stable internal app, put it in your services VM as a container and store its data in a clearly named volume you can back up.